Crawl errors and crawl budget tend to get overlooked, but they are important parts of SEO. They can strongly influence how search engines view your site and, in turn, where it ranks. This article explores the relationship between crawl errors, crawl budget, and rankings, and explains how to manage both to improve your results.

What Are SEO Crawl Errors?

A crawl error arises when a search engine bot such as Googlebot has trouble reaching and crawling a specific webpage. These errors can severely limit how many of a website’s pages appear in search results, leaving crucially important content unindexed.

Two primary categories of crawl errors exist:

Site-Level Errors: These issues affect your entire website. Examples include DNS errors, server timeouts, or misconfigured robots.txt files. If Google cannot access your site at a foundational level, it won’t crawl your pages efficiently.

URL-Level Errors: These pertain to specific URLs on your website. Common examples are pages that return 404 (Not Found), 403 (Forbidden), or 500 (Server Error). Even a handful of these errors on important pages can hurt your SEO performance.
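
As a rough illustration of how the URL-level errors above might be triaged, the Python sketch below sorts HTTP status codes into buckets. The function names `classify_status` and `summarize` and the bucket labels are assumptions made for this example; they are not part of any SEO tool.

```python
def classify_status(code):
    """Map an HTTP status code to a rough crawl-health bucket (labels are illustrative)."""
    if 200 <= code < 300:
        return "ok"
    if 300 <= code < 400:
        return "redirect"
    if code == 404:
        return "not_found"
    if code == 403:
        return "forbidden"
    if 400 <= code < 500:
        return "client_error"
    if 500 <= code < 600:
        return "server_error"
    return "unknown"


def summarize(crawl_results):
    """Group URLs by bucket so that error pages stand out.

    crawl_results is a hypothetical mapping of URL -> status code,
    for example exported from a crawler.
    """
    buckets = {}
    for url, code in crawl_results.items():
        buckets.setdefault(classify_status(code), []).append(url)
    return buckets
```

Feeding the function a mapping of URLs to status codes from any crawl export would give one list per bucket, which makes it easier to decide what to fix first.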

How Can You Find Crawl Errors?

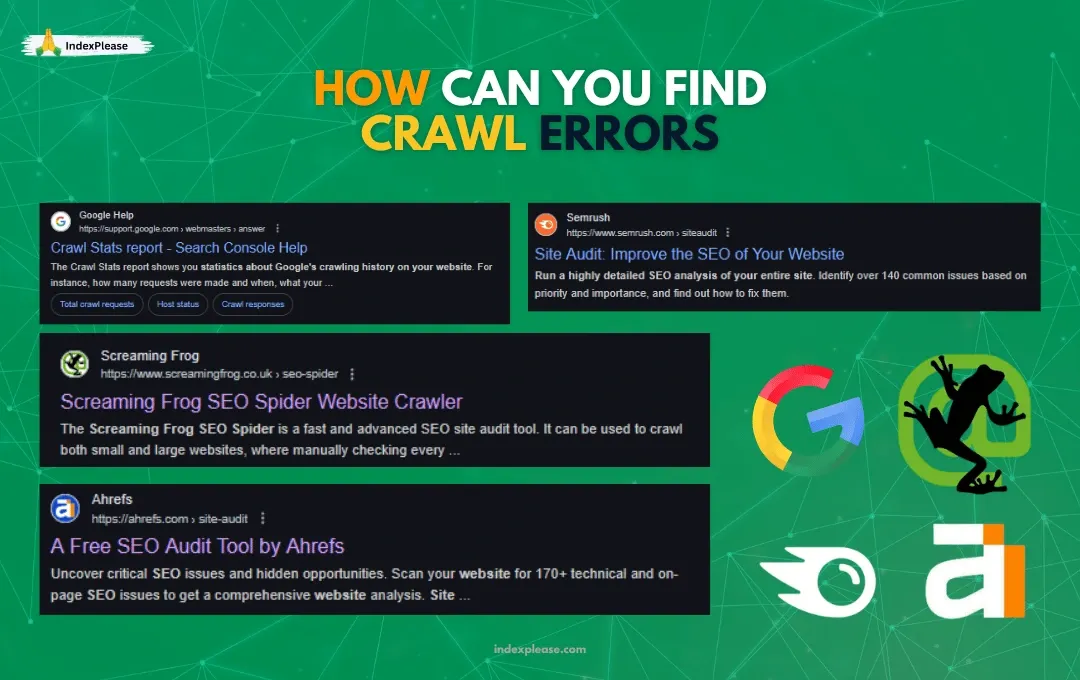

Tools that help detect crawl errors include:

Google Search Console, under “Crawl Stats” and “Indexing”

Screaming Frog

Ahrefs Site Audit

These platforms pinpoint broken links, missing server resources, or other issues that hinder smooth crawling.

What is a Crawl Budget, and Why is it Important?

Crawl budget is the number of pages that a search engine can crawl and index on your website within a given period of time. It is affected by the general health, structure, and authority of your website. If a website’s crawl budget is not managed properly, crucial pages may be crawled late or skipped entirely.

Factors That Influence Crawl Budget

Site Size: Larger websites typically require more crawl budget to account for their volume of pages.

Content Quality: Thin, duplicate, or irrelevant content may lead Google to waste crawl budget on unimportant pages.

Page Speed: Bots tend to crawl slow pages less thoroughly, which reduces overall crawl efficiency.

Crawl Structure: Poorly organized internal linking can keep bots from discovering important pages.

The Connection Between SEO Crawling Errors and Rankings

Now, here’s the million-dollar question: do crawl errors and crawl budget affect rankings? The answer is yes, but indirectly.

Crawl Errors’ Impact on Rankings

When critical pages cannot be crawled or indexed due to errors, they won’t appear in search results. This translates into:

Reduced organic traffic.

Missed opportunities to target keywords.

A fragmented user experience when visitors encounter broken links.

For instance, if your most profitable landing pages result in 404 errors, your conversion rates and revenue will suffer.

Crawl Budget and Rankings

Google allocates crawl budgets based on your website’s authority and perceived value. If bots waste their budget crawling irrelevant or duplicate pages, your most valuable content might be skipped. Consequently, rankings for competitive keywords could plummet.

How to Minimize SEO Crawling Errors

The good news? Most crawl errors are preventable with proper maintenance. Follow these strategies to keep your website error-free:

Audit Your Website Regularly

Run an audit at least once a month with Screaming Frog or Google Search Console. Take care of problems such as 404 errors first, then fill in missing metadata and fix broken internal links.

Use Redirects to Fix 404 Errors

301 redirects send users and search engines from a moved or removed URL to the appropriate page. With the right redirection strategy in place, link equity is preserved.
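
One part of a sound redirect strategy is commonly taken to be avoiding long redirect chains and loops. The sketch below assumes the redirects are available as a simple source-to-target mapping; the names `resolve_redirect` and `flatten` and the `max_hops` limit are hypothetical choices for this example.

```python
def resolve_redirect(url, redirects, max_hops=5):
    """Follow a redirect map to the final URL and return (final_url, hops).

    Raises ValueError on a loop or when the chain exceeds max_hops
    (max_hops is an arbitrary limit chosen for this sketch).
    """
    seen = {url}
    hops = 0
    while url in redirects:
        url = redirects[url]
        hops += 1
        if url in seen:
            raise ValueError(f"redirect loop at {url}")
        if hops > max_hops:
            raise ValueError("redirect chain too long")
        seen.add(url)
    return url, hops


def flatten(redirects):
    """Rewrite every entry so it points straight at its final destination."""
    return {src: resolve_redirect(src, redirects)[0] for src in redirects}
```

Flattening a map such as `{"/old": "/interim", "/interim": "/new"}` points both old URLs directly at `/new`, so neither users nor bots pass through an intermediate hop.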

Edit Robots.txt

You can keep bots away from unimportant pages, such as staging pages or login portals, by editing robots.txt. Just make sure you don’t block important content by mistake.
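
To check that a robots.txt change blocks only what you intend, a small test like the following can help. It uses Python's standard `urllib.robotparser` module; the rules in `ROBOTS_TXT`, the `example.com` URLs, and the helper name `blocked_urls` are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: block staging pages and the login portal for all bots.
ROBOTS_TXT = """\
User-agent: *
Disallow: /staging/
Disallow: /login
"""


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if not parser.can_fetch(user_agent, u)]
```

Running `blocked_urls` against a list of your most important URLs before deploying a new robots.txt is a cheap way to catch an overly broad `Disallow` rule.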

Troubleshoot Server Problems

Make sure that your hosting environment is strong enough and can support your website. If the server is slow or frequently goes offline, this will annoy users as well as bots, hurting your ranking.

Improve Page Speed

A faster website improves both user satisfaction and bot accessibility. You can achieve this by compressing images, optimizing code, and using browser caching.

Methods for Increasing Crawl Budget Efficiency

Reducing crawl errors is necessary, but making the most of your crawl budget is just as vital. Keep the following ideas in mind:

Focus On Significant Pages First

Pages that are no longer relevant, or that do not support your SEO strategy, should carry a noindex directive. This helps search engines focus on what is important.
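
A noindex directive is commonly set with a robots meta tag in the page's head. As a minimal sketch, assuming you have a page's HTML as a string, the following uses Python's standard `html.parser` to check whether that tag is present; the class and function names are hypothetical.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect the directives found in <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() == "robots":
            content = attr.get("content") or ""
            for directive in content.split(","):
                if directive.strip():
                    self.directives.add(directive.strip().lower())


def has_noindex(html):
    """Return True if the HTML carries a robots meta tag with noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives
```

A check like this can confirm that retired pages really carry the directive and, just as usefully, that priority pages do not carry it by accident.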

Keep A Clear And Logical Site Structure

Organize your content into clear categories. For example, blog posts should be grouped under relevant categories and linked to one another. This will enhance crawlability.

Reduce The Amount Of Duplicate Content

Consolidate duplicate pages and use canonical tags to point to the preferred version, so that bots focus on the correct URLs. Spending crawl budget on duplicate content is wasteful.
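
Before adding canonical tags, it helps to know which URLs are variants of the same page. The sketch below groups URLs that differ only by letter case in the host, a trailing slash, or tracking parameters. The `TRACKING_PARAMS` list and the normalization rules are assumptions for this example; what counts as a duplicate depends on your site.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of parameters that do not change page content.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign"}


def normalize(url):
    """Reduce a URL to a comparable form (illustrative rules only)."""
    parts = urlsplit(url)
    query = [(k, v) for k, v in parse_qsl(parts.query) if k not in TRACKING_PARAMS]
    path = parts.path.rstrip("/") or "/"
    return urlunsplit(
        (parts.scheme.lower(), parts.netloc.lower(), path, urlencode(sorted(query)), "")
    )


def duplicate_groups(urls):
    """Map each normalized URL to its variants, keeping only real duplicates."""
    groups = {}
    for url in urls:
        groups.setdefault(normalize(url), []).append(url)
    return {canon: variants for canon, variants in groups.items() if len(variants) > 1}
```

Each key in the result is a candidate for the `href` of a `<link rel="canonical">` tag on all of its variants.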

Update The XML Sitemaps Regularly

Keep your XML sitemaps clean and up to date, and submit them to Google Search Console. This gives bots an accurate picture of your website’s structure and helps them find your priority pages.
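
If your CMS does not generate a sitemap for you, one can be built from a list of live pages. The following is a minimal sketch using Python's standard `xml.etree.ElementTree` and the sitemaps.org namespace; the function name `build_sitemap` and the shape of the `pages` input are assumptions for this example.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build a sitemap XML string.

    pages is an iterable of (url, lastmod) pairs, with lastmod
    given as a YYYY-MM-DD string.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="unicode")
```

Generating the file from the same source that knows which pages are live (and leaving out redirected, noindexed, or deleted URLs) is what keeps the sitemap clean.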

Check The Crawl Statistics

Review your crawl statistics in Google Search Console so you can spot and understand increases or decreases in crawl rate, which may indicate a problem.
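
If you export daily crawl request counts (for example, by copying them from a report into a list), a simple check against a trailing average can highlight sudden changes. This is only a sketch: the function name, the 7-day window, and the 50% threshold are arbitrary assumptions, not values recommended by Google.

```python
def flag_crawl_anomalies(daily_counts, window=7, threshold=0.5):
    """Return the indexes of days that deviate sharply from the recent average.

    A day is flagged when its count differs from the mean of the previous
    `window` days by more than `threshold` (as a fraction of that mean).
    """
    flagged = []
    for i in range(window, len(daily_counts)):
        baseline = sum(daily_counts[i - window:i]) / window
        if baseline and abs(daily_counts[i] - baseline) / baseline > threshold:
            flagged.append(i)
    return flagged
```

A flagged day is a prompt to investigate, not a diagnosis: a drop might point to server errors or a robots.txt change, while a spike might follow a large batch of new URLs.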

SEO Crawling Errors: Why Fixing Them Is Always Important

SEO crawling errors hurt more than search rankings: they also damage the user experience, the site’s credibility, and trust in the brand itself. If a website has too many issues, it makes a bad impression on both visitors and search engines. Fixing these issues right away shows professionalism and a commitment to quality.

As search engines focus more on efficiency, it’s crucial for websites to control crawl errors and budgets. If search engines can’t reach your pages, your rankings and visibility will suffer. That’s where IndexPlease comes in. We offer powerful indexing solutions that let you discover which of your important pages are crawled or indexed, and which ones are being ignored. Simply enable IndexPlease to fix your website’s structure, broken links, and trouble spots, and you’ll spend less time troubleshooting errors and more time completing essential tasks. Whether you are dealing with 404s, slow crawling, or wasted crawl budget, the goal is for every important piece of content to be visible where it matters: on search engines.

FAQs

What is the distinction between crawl errors and crawl budget?

Crawl errors are issues that prevent a search engine from retrieving and indexing a website or web page, whereas crawl budget refers to the number of pages that bots can crawl within a given time frame. Both affect SEO, but in different ways.

How do I check crawl errors?

Crawl errors can be checked and resolved using Google Search Console, Screaming Frog, or Ahrefs Site Audit.

Can crawl budget set limitations on my website’s ranking potential?

If a website’s crawl budget is spent on irrelevant or duplicate pages, high-value content may not get indexed. As a result, those pages may rank lower or not appear in search results at all.

How do I improve my crawl budget?

Improve your crawl budget by refining your site’s structure and organization. Use canonical tags and sitemaps, and remove duplicate or low-value pages to make the most impact.

Does every website have a crawl budget?

Every website has a crawl budget, but how it is allocated is specific to each website. The allocation is determined by the website’s authority, structure, and health.

Are crawl errors a problem for eCommerce websites?

Yes. Complex site structures and frequent URL changes make eCommerce stores especially susceptible to crawl errors. Regular maintenance can mitigate these issues.

Conclusion

Crawl errors and crawl budgets are crucial pillars of efficient SEO. While they do not directly affect ranking, they have a profound secondary effect on visibility, traffic, and usability. Addressing crawl errors, improving site structure, and managing the crawl budget appropriately will support higher rankings, more organic traffic, and a better user experience. A properly maintained website gives the business the best chance of success in SEO.